Abhishek Gupta; Ameen Jauhar; and Nga Than

I. INTRODUCTION

The governance of social media platforms has increasingly become an important topic of debate and scrutiny[1]. Given the highly dynamic, volatile, and transnational nature of these digital platforms, governments and other stakeholders such as civil society organisations and citizens struggle to devise effective policies to manage and govern such multinational organisations. At the heart of this debate are concerns over users’ personal data, which platforms accumulate over time yet are often not in a position to protect. What is often observed in practice is the exploitation of users’ data for profit. This sometimes constitutes a violation of individual users’ informational privacy. Safeguarding users’ privacy has thus been a central concern for researchers, legislators, and policymakers working in the domain of informational governance.

We situate our research against a backdrop in which tech companies increasingly lobby governments in the Global North to keep privacy regulations lax, and in which Indian citizens are engaged in a heated debate about privacy rights and about how to operationalise different privacy principles with respect to different demographic attributes (such as ethnicity, age, gender, and religion). Further, at the user level, informational privacy is embedded within specific socio-cultural and political conditions, which in the Indian context pose many challenges for individuals in exercising their rights, and for companies in tailoring their own policies. For example, despite religious affiliation and caste being sensitive personal information, our survey (discussed later in this paper) revealed that many Indian citizens are comfortable with their disclosure; yet it is financial information (including simple things like PAN or bank account numbers) that is considered much more sensitive. Many of our participants highlighted it as the one piece of personal information they would never share, while being comfortable sharing sensitive demographic information. Another example is one’s gender identity, a protected category under many countries’ laws, which could be identified on Facebook. Even though the company announced in 2021 that it would not allow advertisements that target sexual orientation[2], this information might still be used against users depending on the local context. It is thus important to investigate how individuals exercise informational privacy ‘on the ground’, in the context of India.

In India, the Supreme Court read an individual’s right to privacy as part of Article 21 (the fundamental right to life and liberty), in its landmark judgement of K.S. Puttaswamy v. Union of India.[3] While acknowledging the right to privacy as a fundamental right, the Supreme Court categorically utilised personhood and autonomy to define what the right to privacy entails. This recognition of individual autonomy, as an inextricable part of the right to privacy, is crucial[4] – as legal researchers and constitutional commentators have since argued – as it affords an individual the right to determine when, how, and what kind of information about her can be shared or used by a third person or entity.[5]

Stemming from this recognition of the fundamental right to privacy, the larger discourse on data protection culminated in the introduction of the Personal Data Protection Bill, 2019 (“PDP Bill”) in the Indian Parliament.[6] The PDP Bill was the product of year-long, extensive deliberations of a government-appointed committee of experts, chaired by a former judge of the Supreme Court of India, Justice (Retd.) B.N. Srikrishna (“Srikrishna Committee”).[7] It balances the interests of the data principal (also known as the data subject) with those of Big Data, a collection of complex datasets that allow for data mining, data linking, and making connections between diverse data[8], and of companies relying on such datasets within a growing digital economy in India. In pursuit of safeguarding the rights of data principals, the PDP Bill, among other steps, prescribes clear standards for providing notice to, and obtaining informed consent from, individuals whose data is being collected.[9]

Therefore, an emerging body of scholarship from India focusing on data protection and informational governance shows that it is necessary to preserve a user’s agency regarding the collection, storage and usage of her data.[10] However, the outrage over WhatsApp’s alteration of its privacy policy presents a scenario where such informational autonomy is arguably unilaterally compromised. In January 2021, WhatsApp users woke up to a notification informing them of significant updates to the existing privacy policy.[11] In India, seemingly the most contentious perceived change was the unequivocal disclosure of how WhatsApp users’ metadata was being freely shared with its parent company, Facebook. Pertinently, Facebook has over the past few years increasingly found itself embroiled in a wide range of scandals and allegations of violating basic privacy norms, peddling personal data of its users to third parties, and deceiving its users into parting with excessive personal information, thus depriving them of their agency.[12] The January 2021 update also presented a take-it-or-leave-it scenario: it informed users of the platform that failure to consent to these updates would result in the deactivation of their accounts from February 8, 2021.[13]

The entire saga drew widespread criticism of the sway that Big Tech holds over individual data and personal information, and of the questionable manner in which it compels users of such platforms to consent to unbridled data sharing or risk losing access to the platform. The incident also prompted questions on whether these issues can be resolved through the proposed PDP Bill,[14] and whether ‘Big Tech’ can be reined in. Consequently, given the immense backlash against these proposed changes, WhatsApp deferred the implementation of this policy.[15] It is pertinent to mention here that the Competition Commission of India (“CCI”) is at present conducting an investigation into WhatsApp’s functioning, triggered by this change in privacy policy.[16] Additionally, having passed its self-assigned deadline of May 15, WhatsApp was also put on notice by the Indian Government of potential punitive action against it, as it attempts to enforce the changes to its terms of service and privacy policy.[17] Clearly, WhatsApp’s attempts to alter its terms of service and privacy policy face an uphill battle.

Against this background, the authors undertook the present research with the objective of better understanding qualitative perspectives of social media users in India on issues of data protection and informational privacy. While the PDP Bill aims to impose certain regulatory checks and balances on the collection and use of personal data, it remains a concern whether current and prospective legislation aligns with, or even accounts for, such privacy perspectives of the Indian citizenry. The PDP Bill fundamentally aims to govern the collection and usage of data, while preserving individual autonomy over the same. It aims to give legislative protection to the right to privacy that has been established by the Supreme Court in Puttaswamy. As such, it is likely to become the cardinal legal instrument for preserving the informational autonomy of Indians. Such laws would be better informed when supplemented with empirical study, evaluation, and incorporation of the public’s perspectives, through robust stakeholder engagement and participatory lawmaking, rather than relying on anecdotal experiences alone.[18]

These perspectives may also add local nuance and context to more common notions of privacy. For example, our survey and follow-up focus groups for this paper, which are discussed in detail subsequently, established that financial information (like annual salary and bank details) seemed to be far more important to a majority of Indian social media users than other personal information like religious beliefs, political affiliation, and even email addresses. We posit that our mixed-methods research, combining insights from survey data and interviews, helps researchers understand what is important in users’ daily practices of data privacy protection. In light of this, legislators should pay greater attention to whether all forms of individual information should be afforded equal priority or if financial information should be safeguarded first and foremost. Thus, we believe that these perspectives are likely to enable more evidence-informed decision-making, and to replace assumed ground realities. Furthermore, evidence-based public policy-making also advances the basic idea of participatory governance that is integral to the ethos of liberal democracies.[19]

This paper is structured into three main segments. Section II discusses the problems in the existing formats of Terms and Conditions (“T&Cs”) and privacy policies, deriving inputs from existing literature on this issue, as well as our own empirical research. We examine existing literature on users’ behaviour towards informational privacy on social media platforms, including a determination of how prevalent the ‘privacy paradox’ is among Indian social media users. The privacy paradox refers to the differences between “individuals’ intentions to disclose personal information and their actual personal information disclosure behaviours”.[20]

In other words, people may claim that privacy matters, but there are extraneous circumstances which might cause them to act otherwise, such as disclosing personal information for gaining access to services that provide convenience (saving passwords across devices), sharing personal information in exchange for promotional offers, sharing location information on social media when travelling, among other such behaviours. For instance, empirical research has shown that due to an informational overload, many people do not even skim through privacy policies that are typically published by most digital service providers.[21]

Section III is a commentary on our empirical research on privacy perspectives of Indian social media users, identifying our methods, and discussing our key findings and analyses. To the best of our knowledge, little similar empirical research has been undertaken in the Indian context in the past.[22] Most recently, a 2019 survey undertaken by CUTS International asked Indian Internet users about their privacy and data protection practices for all digital technologies.[23] The CUTS survey presents a demographic analysis of how different populations in India interact with digital technologies. Within this larger framework, it further examined some elements of data sharing practices, and how they are perceived by users of different digital technologies. While the CUTS survey brings valuable empirical insights on issues like digital access and digital literacy amongst Indians, its inputs, specifically for informational governance, are limited given its broader scope of engagement. It is pertinent to mention that, even with its limited set of questions, the CUTS survey demonstrates to some extent the existence of the privacy paradox among Indian consumers. For instance, the survey finds that 90% of users knew that the right to privacy is a fundamental right, and 70% advocated better awareness around it. Yet when it came to their individual measures, only 11% of the people surveyed stated that they actually read the privacy policies of different digital services. This behavioural contradiction between what a user aspires to and her actual actions is the essence of the privacy paradox.

In comparison to the CUTS study, which broadly focused on all kinds of users of the Internet (or other digital services) in India, our research is more targeted. It puts the microscope on a more precise set of subjects, i.e., social media users. This specific focus responds to an increasing debate around social media platforms’ practices of data collection and data sharing, and the risks these entail for individual privacy. We found it vital to look singularly at the users of these platforms, examining their perspectives on existing data collection practices and the limitations therein, and identifying their expectations of government regulators for such platforms.

Using a mixed-methods research design that takes advantage of both a quantitative survey and in-depth interviews, we generated insights that would not be possible to obtain from a single approach.[24] We first conducted a national survey through social media platforms and Amazon Mechanical Turk. The survey also asked participants if they wanted to participate in follow-up interviews, which led to in-depth interviews with 13 participants.

Our research examines consumers’ privacy behaviours and attitudes from two angles: users’ privacy preferences, and platform design. First, we conducted our own survey to examine Indian consumers’ privacy attitudes and behaviours for four main social media platforms: Facebook, YouTube, Twitter, and LinkedIn. These platforms were chosen based on the Alexa web traffic rankings as the most visited platforms on the web in the Indian geographical region.[25]

Importantly, we examine the power differential between the social media platforms and their users, with the latter largely holding tokenistic agency over their personal data.[26] This power imbalance is manifested in standardised contracts with a take-it-or-leave-it stipulation. Users may either choose to accept all terms and conditions (including broad access to their personal information for processing and sharing), or risk being excluded from the ecosystem of a dominant social media platform. This imbalance is perilous to the notion of “informed consent” and informational autonomy. In fact, some legal analysts have gone on to suggest ‘data collection practices’ as a test for assessing abuse of dominance by Big Tech.[27] We examined how users feel about this imbalance, and whether the option of “switching platforms” is actually possible, or merely a legal gimmick offered by the more dominant platforms, which recognise that their users will not switch to a smaller, more obscure service.

Finally, we conclude in Section IV with suggestions on future empirical research needed on informational governance in India. In the analysis, we specifically focus on the power imbalance between social media platforms and users by examining T&Cs, including privacy policies, to assess and evaluate the dynamics between different actors including users, government, and platforms. Terms of Service (“ToS”) can be either empowering or disempowering to users. This was also brought up in the quantitative and qualitative surveys, expressed in the form of a lack of agency over one’s personal data and a reliance on social media platforms for functioning within society. Our goal is to understand ‘privacy in context’,[28] whereby we aim to get a better understanding of those dimensions of privacy that are the most relevant in the relationship between social media platforms and their users. This differs from the broadest notions of privacy in that it focuses only on the informational aspect and seeks to understand why and how users choose to engage on the platform with respect to their privacy.

II. CHALLENGES TO UNRAVELLING TERMS AND CONDITIONS AND PRIVACY POLICIES

In this section, we will outline the relevant literature concerning our research question “what are the perspectives of Indian social media users regarding privacy policies and terms & conditions commonly used by such platforms?”. From the perused literature, we have identified some key issues which are discussed hereinafter.

Terms and Conditions

T&Cs have existed since the beginning of service offerings. They serve as a legal instrument to bind users interacting with a service so that the provider has protections against misuse of the service; for example, automated scraping of content from the service, which might degrade the performance and availability of that service for other users.[29] While some services might have had skeletal T&Cs in the early days of their operations, with the emergence of templates online, we have seen the rise of fleshed-out T&Cs even for nascent services. More mature services with large user bases have more detailed and well-articulated documents that can be quite restrictive for users. They are also more burdensome to the end-user, who is ultimately supposed to read them before providing consent. Aleecia McDonald and Lorrie Cranor[30] show that this might not be feasible given the rising number of services that people use in the course of their daily lives and the increasing length of these documents.[31]

Privacy Policy

A parallel and sometimes separate document is the privacy policy that delineates further how the personal data of a user might be collected and used by the service provider, and potentially even third parties. This is another legal instrument that establishes a legal contract between the user and the service provider.[32] While the T&Cs talk largely about interaction on the platform and what constitutes appropriate use, the privacy policy is more specific in its focus. Sometimes it is present as a separate document for which the service provider obtains consent from the user but in other cases, it can be embedded as a part of the T&Cs in which case the user provides their consent to the entire document including the privacy policy. The T&Cs are subjective with variations emerging from the differences in how the companies want to control the interaction of users on the platform based on the functionality that they offer. Privacy policies are typically more consistent across platforms since they have to, at a minimum, adhere to the local and national data laws for the jurisdictions within which they are operating.[33]

Clickwrap Agreements

In most cases, the use of the service is contingent on the binary acceptance of the entire T&Cs (including the privacy policy). These clickwrap agreements capture the consent of a user in the form of a single yes/no choice before they are allowed to proceed with downloading, installing, or using a service.[34] Clickwrap agreements also codify and legitimise the collection of personal data from the user, further motivating the need to study how such agreements shape the power dynamic between social media platforms and their users.

That is, the user is offered no gradations in terms of which pieces of data they are comfortable sharing or which conditions they are comfortable accepting, even if such choices were to alter their experience on the service. Users must choose between having access to the service when they accept all the conditions, or no access to the service at all if they deny consent. Even if the user objects only to a particular part of the document and not to all of its provisions, some of which might be reasonable and in alignment with the user’s expectations of the service, the user does not have graduated choices at the moment and is only offered binary alternatives.
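The contrast between binary and graduated consent can be illustrated with a minimal sketch. The class names, field names, and purpose labels below are hypothetical and are introduced only for illustration; they do not describe how any platform actually records consent.

```python
from dataclasses import dataclass, field


@dataclass
class ClickwrapConsent:
    """Binary model: a single flag covers the entire document."""
    accepted_all: bool = False

    def can_use_service(self):
        # Access depends on accepting everything at once.
        return self.accepted_all

    def allows(self, purpose):
        # Accepting the document means accepting every purpose in it.
        return self.accepted_all


@dataclass
class GraduatedConsent:
    """Hypothetical alternative: one decision per purpose."""
    required: frozenset  # purposes without which the service cannot run
    granted: set = field(default_factory=set)

    def can_use_service(self):
        # Access depends only on the required purposes being granted.
        return self.required <= self.granted

    def allows(self, purpose):
        return purpose in self.granted


# A user who grants only what the service needs (labels are illustrative).
user = GraduatedConsent(required=frozenset({"account_management"}))
user.granted.add("account_management")
print(user.can_use_service(), user.allows("ad_targeting"))
```

Under the binary model, a user who wants access has no way to withhold any single purpose; under the graduated model sketched here, access and optional data uses are decided separately.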

At the moment, these T&Cs and privacy policies are tedious, placing the onus on prospective users to adequately parse them[35] and provide their informed consent prior to using the service. Past studies have demonstrated how little time users spend engaging in such an exercise[36] and how they arguably forgo their agency and choice over their privacy and data rights in favour of gaining expedient access to the service.[37]

Design Patterns in the Presentation of Agreements

Today, these T&Cs (and privacy policies) are presented in the form of a pop-up at the initial stage of the user interacting with or signing up for a service. The pop-up itself is typically structured either as a long notice rife with legalese,[38] or as a cursory presentation of the document, with the remainder placed in a rarely accessed part of the service. Both formats discourage active and informed engagement with these documents by the user. This is exacerbated by the fast-paced interactions that users are accustomed to with internet services, where they face an information overload and fatigue[39] that incentivises actions that are ill-informed and mostly dismissive in nature so that they can get to the service that they are signing up for.

What this means concretely in the case of T&Cs and other such agreements is that users rarely pay any attention to the information that is shared with them, and jump straight to acquiescing to whatever is asked of them in order to use the service.

The other problem with this method of presentation is that, over time, the user is likely to forget the conditions governing the use of their information and how those conditions might affect their experience on the service. In fact, as our survey and focus groups revealed, users felt that without a persistent reminder they might initially be cautious, using the service in a manner consistent with their own values and perhaps avoiding some of the negative consequences of their data being abused. Over time, however, they drift into behaviours that are more ‘natural’ to their way of engaging but that run afoul of their implicit desire to protect their information. This happens because the implications of the T&Cs (and privacy policies) have faded into the background, and because these documents are placed, after initial consent, where they are effectively inaccessible.

Violating Informed Consent Principles

This becomes a particular concern when we talk about the ‘informed’ part of informed consent.[40] As held by the Supreme Court in Puttaswamy, informed consent is a sine qua non as far as agency and control over one’s data is concerned.[41] For example, what users understand they are consenting to might be different from what they are actually consenting to because of misunderstanding[42] on their part, which might arise due to a cursory reading of the document, the complexity of its language, or a presentation format that actively discourages thorough perusal. In fact, as Bechmann argues, consent and choice take a backseat to the social value of such platforms, where users often forgo control in order to be part of this modern-day social club or online frat house.[43]

Furthermore, design techniques are often deployed by social media platforms to discourage closer scrutiny of the T&Cs. ‘Dark patterns’[44] are a well-known technique employed by service providers to nudge user behaviour. These can nudge users towards certain behaviours, like continuing to watch videos one after another. They can also nudge people away from certain behaviours, such as preventing navigation away from a page by popping up a limited-time offer, or, in this case, presenting the documents in such a disengaging way that the user is discouraged from reading through them and simply consents in order to get access to the service, without being well-informed about the consequences of that consent.

Static Nature of Current Presentation of Policies

The privacy controls are limited in the sense that once the user has consented to the use of their data through the initial consent to the document, their control diminishes largely to the options that are offered by the platform pursuant to its own choices. These choices are often motivated by commercial interests and take little account of end-user welfare. Numerous occasions have shown that T&Cs and other such documents are subject to changes that might come as a surprise to users[45] [46] [47] reminding them of the considerations that they made when they first signed up for the service. This reiterates one of the fundamental flaws in the current consent-acquiring mechanisms, whereby the burden for consent is placed upfront and consent is obtained under manufactured duress that forces the user to quickly accept the T&Cs (and privacy policy) so that they can proceed to using the service, an idea that is aptly captured by the term clickwrap agreements.

So what are some changes that can be made to address this? Not only must timely and periodic reminders be issued to users regarding the platforms’ data collection and sharing practices, but these reminders should also be presented in a conspicuous, easily accessible, and lucid format. The idea is to be as transparent as possible about these practices in order to ensure that consent given by a user is up to date and meaningful. For example, the Signal app provides spaced-out reminders for the PIN that is set to secure the app so that users don’t forget a crucial piece of information. This is done in a non-intrusive manner, making the action a useful addition to the user experience on the service. Spaced repetition is a well-known method for transferring information from short- to long-term memory.[48]
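As a minimal sketch of how spaced-out consent reminders could be scheduled, the snippet below computes reminder dates with gaps that grow after each reminder. The function name, the seven-day first gap, the doubling factor, and the start date are all assumptions made for illustration; this is not Signal’s actual schedule, nor a schedule proposed by any platform.

```python
from datetime import date, timedelta


def reminder_dates(consent_date, first_gap_days=7, growth=2.0, count=5):
    """Return the dates on which a consent reminder would be shown.

    Each gap is `growth` times longer than the previous one, a common
    spaced-repetition pattern. All defaults are hypothetical.
    """
    dates = []
    gap = float(first_gap_days)
    current = consent_date
    for _ in range(count):
        current = current + timedelta(days=round(gap))
        dates.append(current)
        gap *= growth  # widen the interval before the next reminder
    return dates


# Example: a user who consented on an arbitrary date in January 2021.
for reminder in reminder_dates(date(2021, 1, 4)):
    print(reminder.isoformat())
```

Widening the gaps keeps reminders frequent while the terms are still new to the user and infrequent once they are familiar, which is what keeps such prompts non-intrusive.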

The most pertinent impact on the rights of users is that they may be caught unaware that some of their legally protected rights might be violated in the way they engage with the platform. This comes with the realisation that the T&Cs (and privacy policies) are structured in a way that does thread the legal needle but veers towards using personal data in ways that might violate the expectations of the user. Perhaps more importantly, through complex downstream workflows and recharacterisations of the data, such use may violate users’ rights in ways that are hard for the average user to detect and bring to light. This structuring of the T&Cs and privacy policies exacerbates the power imbalance by shifting agency from the user to the social media platform, which not only has home-ground advantage but also sets the rules of the game and rigs them in its favour, namely by creating the mechanisms that facilitate unfettered access to personal data.

The current state of policy analysis looks at this issue in a static manner: the analysis is focused on what the consent and privacy settings allow the social media platform to do and what agency they confer on the user. But, given the interconnected nature of the data ecosystem in the modern world, we risk overlooking cases where the law is adhered to in letter but not in spirit, as data is whisked around through different services, being recombined and utilised in ways that are opaque to users and legislators. The legality is also difficult to analyse given both the opacity and the multitude of jurisdictions through which the data might pass.

When it comes to the rights of the users, the lack of awareness of their rights[49] only exacerbates the problem further because they don’t have the ability to detect areas where there is a potential for their privacy and data rights to be violated.

III. DATA AND METHODS

This paper is based on research conducted between November 2020 and January 2021. Our analysis relies on a survey and three focus-group interviews with 13 people who used different social media platforms on a daily basis. Interviewees were recruited from the respondents of the survey, which was a non-random sample of 149 social media users sourced through snowball sampling and Amazon Mechanical Turk.

We determined that to gauge how difficult people find the existing T&Cs (and privacy policies), we must explore whether and to what extent everyday users read these policies, and the amount of time they spend doing so. We designed a survey to ask questions such as how often users read terms of service, whether they think reading terms of service matters, how accessible terms of service are to them, and what specifically in the documents is confusing or creates barriers for them. Furthermore, through in-depth interviews, we were able to further examine users’ concerns, their feeling of being in control, and their proposals on how to improve the T&Cs.

We began our research with a web-based survey designed to reach a broad range of social media users in India. The survey focused on users’ experiences with four platforms: Facebook, Twitter, LinkedIn, and YouTube. Facebook is the paradigmatic social media platform, while Twitter has gained some traction in India recently. LinkedIn is a social media platform for professional networking, while YouTube is mainly for video sharing and entertainment. It is pertinent to mention here that we did not include WhatsApp specifically in our list of social media platforms. However, around the time of our focus group discussions (discussed subsequently), due to large public outcry and media reportage on its decision to change user policies, our interviews in the focus groups did involve discussions around it.

We recruited social media users to take our online survey in two ways. First, using snowball sampling[50] through our own social networks and social media networks, we were able to collect 91 survey responses. Second, in order to broaden the range of respondents in terms of geography and socio-economic background, we utilised Amazon Mechanical Turk (“MTurk”), a crowd-sourcing platform that enables researchers to collect survey data from a diverse pool of participants. This tool has become popular with researchers in many disciplines including computer science, linguistics, political science, and sociology. However, the tool was designed to be used by researchers from the Global North, while MTurk survey participants came from the Global South, especially from India.[51] In total, we got 81 responses from MTurk, and after checking the quality of responses and cleaning the data, we ended up with 60 responses for final analysis. In total, we have 149 responses for analysis in the final dataset. This dataset is a smaller sample than that of the CUTS study, which surveyed approximately 2100 respondents. This is attributable to our small team of researchers conducting this empirical study remotely due to the pandemic, and without any significant financial aid. However, in order to enrich our survey inputs, we gathered detailed qualitative inputs from our participants, as elaborated subsequently.

Given that our research team included predominantly English-speaking individuals, we targeted the English-speaking population through both phases of recruitment. Our sample of survey respondents is not representative of the population of social media users in India as a whole. Given the lack of empirical data and research concerning individual understanding and experiences of privacy in India, our research provides an important first step and description of social media users’ experiences in the Indian context.

The last question in our online survey inquired whether the respondent would be interested in participating in a follow-up group interview. Among the 149 people who participated in the survey, 78 indicated that they would be interested. We then contacted them in January 2021, and conducted three group interviews with 13 participants. Each interview lasted 90 minutes on average. In order to protect respondents’ confidentiality, we use pseudonyms throughout this paper.

A. Descriptive Statistics

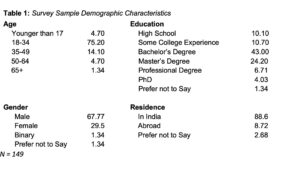

The majority of respondents were young adults between the ages of 18 and 34 (75.20% of the respondents) and resided in India (88.6%). Most identified as male (67.77%), and 43.00% held a bachelor’s degree.

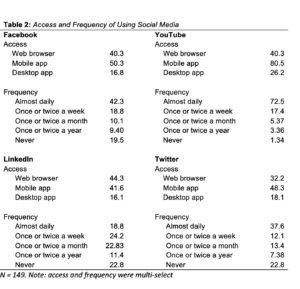

Our respondents used the four platforms frequently, with the exception of LinkedIn. Specifically, 72.5% of users visited YouTube, and 42.3% of users visited Facebook, on a daily basis. Only 37.6% of the users said the same for Twitter, and 18.8% for LinkedIn. Twitter and LinkedIn were the least frequented, with 22.8% of users saying that they never visited these platforms. Most people access the platforms through either mobile phone apps or web browsers; a few also access the services through apps downloaded on their desktops. Notably, most users accessed YouTube (80.5%), Facebook (50.3%), and Twitter (48.3%) via their mobile phones. Further, users accessed LinkedIn the most through web browsers (44.3%).
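To make these shares easier to interpret, the short calculation below converts the reported daily-use percentages into approximate respondent counts. It assumes that each percentage was computed over all 149 survey responses, which the text does not state explicitly; the helper names are ours.

```python
SAMPLE_SIZE = 149  # total survey responses reported in Section III

# Daily-use shares reported in the text, in percent.
daily_use_pct = {
    "YouTube": 72.5,
    "Facebook": 42.3,
    "Twitter": 37.6,
    "LinkedIn": 18.8,
}


def approx_count(percent, n=SAMPLE_SIZE):
    """Approximate number of respondents behind a reported percentage."""
    return round(percent / 100 * n)


def as_percent(count, n=SAMPLE_SIZE):
    """Convert a respondent count back to a percentage with one decimal."""
    return round(count / n * 100, 1)


for platform, pct in daily_use_pct.items():
    count = approx_count(pct)
    # Converting the count back is a quick consistency check on the
    # assumed denominator.
    print(f"{platform}: about {count} respondents ({as_percent(count)}%)")
```

Under this assumption, each rounded count converts back to the reported percentage, which suggests that the platform-usage figures are shares of the full sample.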

Our survey respondents use these different social media platforms for various reasons including keeping up with friends and family, getting entertainment, being informed about social events, learning about other countries, getting job opportunities, running their own businesses, getting customers, and feeling a part of a network.

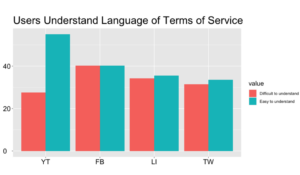

Regarding whether the survey respondents have read the platforms’ terms of service documents, the respondents provided mixed results. Among YouTube and Facebook users, there were more users who had read the ToS documents than those who hadn’t. 51.0% of YouTube users, and 47.0% of Facebook users said that they read ToS. By contrast, there were more LinkedIn and Twitter users who have never read ToS documents than those who have read the documents. 44.3% of LinkedIn users and 42.3% of Twitter users said that they did not read ToS. Similarly, the results are also mixed when it comes to whether users found the language of ToS to be accessible. Notably, 55.0% of YouTube users found its ToS to be accessible linguistically (Figure 2). An equal percentage of Facebook users found the language to be accessible and inaccessible (both are 40.3%).

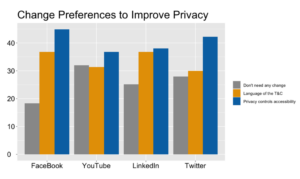

When asked what changes they would prefer to see to improve their privacy experiences on social media platforms, most users wanted to have more control over their privacy settings on the platform. Notably, 44.9% of Facebook users and 38.1% of Twitter users preferred more accessible privacy controls. 36.7% of YouTube users, 29.9% of YouTube users, 31.3% of LinkedIn users, and 36.7% of Twitter users prefer to have more accessible language in the T&Cs.

The survey results show that respondents perceived different platforms differently when answering the questions on whether they have read each platform’s T&C, and whether they thought that the T&C language was accessible. In order to understand their choices, and how they compared different platforms, we used in-depth interviews to explore various aspects of privacy practices which we could not solicit through the survey.

B. Differences in Privacy Experiences

In this section, we provide survey answers to the question: “What does information privacy mean to you?” We wanted to understand how users define privacy, and how their conception of privacy translates to their daily practices. The following analysis relies primarily on survey data, whereby respondents were anonymous.

Users provided us with differing conceptions of privacy. To some, “information privacy” pertains to “personal data stored on computer systems.” Privacy could also mean “my personally identifiable information available in the public domain.” These definitions of privacy were relatively general. Others were more critical of the current model of self-governed privacy: social media companies offered certain privacy control settings in the platform interfaces, through which users could hide from the general public their personal information and the content they published to their social networks of neighbours, friends, relatives, and co-workers. However, their other personal information, such as their tastes, location, network, and demographic information, was used by the platforms for commercial purposes such as building the next machine learning and artificial intelligence systems, or was packaged and resold to other companies and individuals. Platforms’ use of the data is vaguely described in T&C documents and privacy policies, which are subject to change.

To these individuals, “information privacy” dealt with “the ability an organisation or individual has to determine what data in a computer system can be shared with third parties.” It is “the right to have control over how personal information is collected and used.” In other words, privacy encompasses all of their data, including what information is shared with third parties and how.

In our focused discussion groups, users agreed that under the current, inadequate model of self-governance, users only have a few options to protect their data. The first option is to opt out of social media altogether, which seems unlikely at a population level. The second option is to utilise privacy controls provided by the platform, and use these measures to restrict information that is shared with the public. In other words, they are left to their own devices on what to share and how to share it. This privacy-by-consent model leaves the burden to protect users’ data to the users. Each user has to be their own privacy activist by figuring out privacy control features, and actively selecting not to share their information if that is what they want. They could change the default setting on their post under “Who can see my stuff?”.

Others suggested that the burden of protecting one’s privacy falls onto one’s own shoulders. Users should not fill out a “social media profile” to be a part of a platform, but provide only the bare minimum requirements to have an account. They were aware that important information such as banking numbers should not be put anywhere on social media. Some even turned on “private browsing” to prevent web browsers from saving certain information. To many users, not making personal information public is the only recourse. However, many felt that not sharing confidential information is enough.

Users repeatedly brought up the advertisement model that social media companies rely on for revenue[52]. Some expressed concerns over the amount of data that platforms collect from their daily activities, especially when it comes to locational information. As one respondent mentioned, “To me, it relates to my movements and decisions being tracked which can be used to stalk me. I understand that I accept for my data to be used to sell me ads etc, and it makes me uncomfortable, but I am more worried about my routines and movements being studied by a third party/stalked”. This respondent was concerned with the ability of platforms to surveil their locations, movements, and daily activities to generate incomes, or ‘surveillance capitalism’.[53] In an advertisement-driven ecosystem, it is in the interest of social media platforms to monitor every behavioural aspect of their users. Platforms use every piece of data about the consumer including demographic data, and data that consumers generated on the platform to build algorithms. In this model, data begets data, and data means more than what is being made visible to the public, and more than simple demographic and location data.

Once a user shares information on these platforms, it is difficult to withdraw it. As another respondent mentioned: “It’s difficult to find places to withdraw personal information. As an example, according to Facebook, if a user chooses to delete any photos and videos, they’ve previously shared on Facebook, those images will be removed from the site but could remain on Facebook’s servers. Some content can be deleted only if the user permanently deletes their account”. In other words, not only does data beget data, social media platforms also keep a complete history of every individual user who has created an account. The platform’s servers keep this history unless users leave the platform altogether.

What becomes clear from our interaction both in the survey and the subsequent focus group discussions, is that understanding of informational privacy and how it functions is a spectrum of ideas and notions. Depending on other factors like privacy literacy, or familiarity with technologies (used by social media platforms), an individual could either take a simplistic or minimalist outlook, or consider informational privacy to be an umbrella of protection against any excessive data collection or processing. In the following section, we will focus on the ToS documents, and specifically how these documents prevent consumers from exercising their privacy.

C. Privacy Literacy

Social media platforms craft lengthy T&C documents that prevent most users, including some of the most educated consumers, from reading and understanding them well enough to provide informed consent. These platforms exercise control through the lack of privacy literacy among the majority of consumers. This lack of privacy literacy shapes the ways in which consumers interact with platforms, and gives rise to platforms’ unilateral power over choices and their lack of responsibility to inform consumers. In an interview, Sneha,[54] a technologist who currently works in a computing lab, shared:

Platforms like LinkedIn or Twitter and Facebook, I have actually never gone through the entire privacy policy documents. I click on “I accept” because it is tedious. To me it seems quite cumbersome to try to decipher the meaning of what they’re trying to say. They are full of technical/legal jargon. Although I am from a tech background, I feel it is not user friendly, and is quite long. It’s a big turnoff for the user. But for WhatsApp, when they updated the privacy policy, it was all in the news like there was a lot of chaos about that. I went on a Google search, and I came to know what changes they are making in not much of a time. It was easy for me. I would like to know the changes and also because WhatsApp has come under scrutiny. Lately, that is also one of the reasons that I’m worried about that, and the other platforms. I’m not so bothered as of now.[55]

Our interviewees mentioned that among the four platforms that we focused on including Facebook, LinkedIn, Twitter, and YouTube, they barely read the terms of service when signing up for the platforms. When there were changes in terms of service, they also did not pay attention to the various changes that the platforms tried to inform them about. However, many respondents during our interviews brought up the most recent changes (at the time of conducting the interviews in early 2021) in WhatsApp’s privacy policy as a case in point in educating the public about privacy, because the issue received a lot of media attention. An entire population was informed not only by the platform itself, but also by popular media about how the company’s policy changes might affect consumers’ choices. The extent to which privacy policy changes could affect not only consumer communications, but also their commercial activities and business activities was also highlighted. This additional effort from actors other than the company making the change boosted not only awareness about the impending change but also helped educate users about the notions of privacy and why they should care about it. Several respondents pointed out that they found such efforts to be useful and they propagated those messages through their own social networks.

Similar to Sneha, Deepak, a university student in Chandigarh, shared:

(The) Privacy Policy is really long. Technical terms related to laws and stuff, which we cannot understand and I don’t think anyone can have time to go through all of that. That’s a big problem. I think they should consolidate to smaller quantities and make it easier for us to understand and right now you know as WhatsApp is really integrated into our society in India. We cannot just cut it off like other social media platforms, and we cannot even switch to other platforms. I think we should address that problem. I don’t have any solutions, but I think we should start looking at some of them.

Many users explained the importance of WhatsApp’s changes in privacy policy vis-a-vis the other platforms that we asked about. They pointed out that not only are ToSs too long to read, but they are also written in fonts that are too small for most common users. Accessibility is a serious problem. They are difficult to understand, and written mainly in English.[56] They are exclusionary by design.

Deepak spoke about privacy policies across social media platforms in general, and then addressed the issue that WhatsApp, in comparison to others, has become too integral to Indian social, political and commercial life. It has become a monopoly in terms of communications in India. Many could not simply “cut it off like other social media platforms,” or “even switch to other platforms.” These platforms have monopoly power in what they do, and have power in governing consumers’ choices. Users are under no illusion that they have a real choice in which platforms they use.

In the context of India, many people rely on social media platforms not only for keeping in touch with friends and family members, but also to conduct business. Preeti, a master’s student in New Delhi, expressed her concerns over older users’ lack of familiarity with privacy, using the WhatsApp controversy as a case in point:

“I think you have to keep in mind the user base of these platforms in India…when my parents who were with me in the room at that time when they looked at it (WhatsApp update), my father immediately accepted it, because he’s like my business is run through WhatsApp. I really don’t care what they want. As long as it’s free. They’re not really going to bother with reading what they’re asking and what they’re demanding and what’s happening if it’s especially like this gigantic text…there were better ways to approach it than to scare people into accepting and the only thing that actually stood out for me in that entire notification was that you do not accept your account will be discontinued…the way these platforms need to approach is how they would expand their business to consumers rather than to scare people into obedience.”

WhatsApp policy changes affect different people differently. People with higher privacy consciousness seem to be hesitant to accept new changes. Those who have to use WhatsApp to conduct business have no choice in this context. Moreover, WhatsApp has become an important part of the communications infrastructure in India, on which the social, political and commercial life of Indian consumers operates. This means that even those who are privacy-conscious have little choice or agency, as they face a lack of options. Signal and Telegram have been suggested as more privacy-friendly options for Instant Messaging (“IM”) communication, but the large network effects in favour of WhatsApp keep people from switching even when the privacy policies are misaligned with their preferences.

D. Transparency

Users often compare the lack of a privacy law in India to the General Data Protection Regulation (GDPR) in Europe.[57] They could recall instances where European websites displayed disclaimers about the rights of users and the type of information companies collect from them. The borderless nature of the internet provides them with opportunities to gauge how transparency requirements set out in law or by the government help consumers become more aware of their rights. They feel emboldened and empowered when a digital newspaper located in Europe informs them of what data will be collected, and how. However, this shift of power only works if there is a policy framework that holds companies accountable, and at the same time provides explicit instructions about what companies have to disclose to consumers and the public.

Preeti: “With GDPR when I say I go to The Guardian or any of these international news websites about 50% of my screen is covered with disclaimer about cookies so that is the most accessible way of telling the user that these are your options, this is how you exercise your right, and we urge you to do it if you want to proceed with this website and that push only came because there was an external law, asking them to declare like be open with the users…I think it’s an external control that is it’s the control from the government that needs to be more (sic) I think bite sized videos or simple Hindi explanations or local language explanations could do well in a country like India, where the user base is not necessarily educated in English.”

The top-down approach to protecting users’ data rights in Europe certainly has a ‘policy diffusion’ effect.[58] It creates an awareness among Indian consumers that elsewhere, users’ privacy rights are protected by the government. However, the example regarding The Guardian is a limited one, since most digital companies would conduct a cost-benefit analysis to evaluate whether they should extend consumer protection mechanisms from one jurisdiction to another. In other words, how Twitter governs consumers’ data would be very different in Europe and India, since the two jurisdictions have different laws regarding privacy rights and data protection. If the cost of changing the platform’s interface outweighs the benefits from data collection, as in The Guardian’s case, where the publisher is not driven by the same profit motives as a social media platform, Indian consumers can also enjoy certain rights and privileges stated in the GDPR. However, if the benefits of tailoring the platform and collecting more data outweigh the cost of implementing that customisation, platforms like Twitter would simply collect Indian consumers’ data and store them outside of India, which they would not do for European consumers. In such cases, strong privacy laws that are unambiguous about what controls need to be put in place are the way to go; otherwise, market forces will naturally nudge companies towards implementations that best serve their own needs.

E. Mobile Phone Privacy

The majority of our survey takers responded that they access social media platforms via mobile phone apps. Mobile phone privacy and security policies might be different from what consumers would experience when they use a web browser. Some consumers were highly concerned about how intrusive apps could be. Amrita, a middle-aged housewife, expressed her concerns over the ambiguity around cell phone data collection:

“When I install some bank applications, or any new app I try to install first will ask me to access the phone. I don’t know what the app wants, or to what extent the app is going to read other data. Everyone is not a software professional, they don’t know where the program is going and what they are doing.”

Apps ask to access consumers’ contacts from their mobile phones, access the phone’s camera, and track consumers’ locations. Our interviewees raised various concerns over the differential nature of privacy when it comes to cell phones and the app ecosystem. Kashvi, an assistant professor of computer science in Chandigarh, shared:

“All these apps whether it’s WhatsApp or Facebook or Instagram (sic), they are sharing information with each other, they are getting our every information. Privacy controls, a few of the privacy controls are with us there’s no doubt about it, but still all these apps are when we install them on our device, they ask us for our location our media our camera and even Facebook shouldn’t require location, but why it is asking for it right. They should mention that they are accessing all parts of our mobile devices. They should mention this fact when we use that particular app.”

Social media apps are quite intrusive. They do ask users whether they could access certain locations in their cell phones. However, most users are not aware of what was and was not collected and accessed. Kashvi went further to suggest that platforms must make clear on their websites how users’ privacy differs between using an app and using a web browser. Informing users of their rights and privileges via a small cell phone screen is insufficient. People would not be able to read and comprehend the level of access these apps have on each consumer’s device. As mobile communication has become a structured part of society,[59] there is a need for further examination of how users exercise and perceive their privacy mediated through mobile devices.

Our participants overwhelmingly discussed the importance of considering privacy within the app ecosystem. This was a surprising finding for us because our original research plan was to focus on general privacy, yet during the interviews, participants emphasised that online privacy through apps and cell phones worked and felt different for them. This particular finding is noteworthy because India ranked second in the world by number of internet users, with over 483 million users in 2018, of whom 390 million accessed the internet via their mobile phones.[60] Furthermore, the app ecosystem is a contentious space where the privacy of consumers is regulated by a few multinational companies such as Google and Apple, who own the distribution channels for these apps.

Mobile apps installed through the app stores maintained by Google for Android and Apple for iOS come with some additional requirements in terms of how personal data and consent are processed. The recent update from Apple[61] requires app developers to provide more information to users so that they can make informed choices. Privacy protections are much stronger in Apple’s ecosystem than in Google’s, where apps are much less strongly regulated. Thus, accessing social media platforms through apps, as opposed to web browsers, poses slightly different concerns, including access to contacts on devices, storage, messages, GPS, camera, microphone, and other elements, which can further complicate both the user’s understanding of privacy and the effectiveness of their informed consent.

A case in point is the dispute between Apple and Facebook over the Facebook app’s collection, through consumers’ cell phones, of data that is not required to run the app. Apple’s pro-privacy stance is only enabled by its monopoly on its devices, while Facebook tries to make the case that Apple’s privacy policy hurts small businesses and app developers.[62] Privacy is an industry issue in this case, and it is up to corporations to decide whether users’ data is still up for grabs, and whether users have a say when the two monopolistic powers actually craft policies regarding users’ personal data protection.

F. Role of Government Oversight

When we asked our respondents what governmental measures should be undertaken to protect user privacy online, two main ideas emerged: first, a more expeditious grievance redressal mechanism, and second, an independent oversight or regulatory body.

Amrita: “If somebody becomes a victim or something happens the government should have a forum. People can pay some nominal fees, and this office can do something. The action should be quick. Unwanted incidents that should be addressed in a swift manner, it should not be like that if you go there the cyber police station cyber police station will say okay get the letter from back this is the thing happening.”

Karthik: “Platforms like WhatsApp need a separate set of regulation, which may not be directly applicable to platforms, such as Facebook or Instagram so the requirement of regulation has to be made much more. Technocrats to handle the portfolio…(sic) it is not important what’s important is who are the people who are putting (the) system in place, what are, what are the kind of people on the ground who will be dealing with each of these individual platforms, so that is off the cuff that’s what I can think of.”

It became abundantly clear that our respondents wanted the government(s) to step in with better regulatory measures. Common suggestions featured standalone regulators for tech companies, faster adjudication in courts, or specialised courts, and guidance from concerned ministries and governmental departments around how social media platforms need to be more accountable. The role that the state and its agencies can play in bettering oversight and regulation is discussed in more detail in the recommendations subsequently.

IV. DISCUSSION AND CONCLUSION

Our empirical research, through both the survey and the focus groups, showed that some broad overarching issues commonly emerged in user experiences and perspectives. These centred on privacy literacy, differences in experiences, and the need for more robust governmental frameworks for regulation and adjudication, among others. For instance, in addition to platform design changes, public education and awareness building are equally important and will be crucial if those changes are to achieve their intended effects. Furthermore, almost 40 percent of the surveyed users felt it imperative to make privacy policies and T&Cs more lucid by redesigning how privacy rights and regulations are presented.[63] [64]

From a policy and governance perspective, our focus groups clearly revealed that users are quite dissatisfied with the sluggishness, or in many cases the absence, of any efficacious grievance redressal mechanism(s). There was also a strong underlying sentiment of not condoning ineffective self-regulation, but instead implementing robust regulatory measures through the state’s agencies, or an independent regulator.

While the Indian Parliament debates the PDP Bill, some interim steps are necessary. This section will highlight actionable inputs which may be adopted by government(s) from a regulatory standpoint, and some internal mechanisms that may be considered by social media platforms to alleviate the concerns raised by their users in India.

A. Measures to be Implemented by Governments and Sectoral Regulators

Apt government action is needed to restore users’ agency over their personal information. The most obvious first step is to ensure that a user comprehends the manner in which her data will be collected, stored and shared. For all the controversy that the WhatsApp incident triggered, it showcased how true informed consent actually plays out. When people were given a lucid exposition of the privacy policy changes which they deemed unacceptable, it translated into palpable public opposition, including even migrating to other mobile messaging services like Signal and Telegram, though to what extent that happens remains to be seen, a consequence of the privacy paradox. Also, as mentioned previously, the strong network effects of the WhatsApp platform in the Indian context limit mass migration away from the platform even with misaligned views on privacy.

The WhatsApp incident can therefore serve as an inadvertent yet insightful example of how the government can regulate social media platforms, cajoling them to be more transparent and comprehensible in their T&Cs. The measures should include:

1. Expanding the general right to explanation – The government should consider setting out a legislative mandate requiring social media platforms to create clear and lucid versions of their T&Cs, and establishing interfaces which allow users to interact and get a better understanding of the governing rules of the platform. This could emanate from an extension of the broader right to explanation that is currently at the heart of significant law and policy debates.[65] Right to explanation, simply, establishes the need for clarity and lucidity in technological processes, for people who are subject to such processes. Included in this right is the necessity for users to have a comprehensible understanding of the ToS of any social media platform they use. The right to explanation in this case would arguably be a more refined and contemporaneous manifestation of “informed consent”, which has been deemed one of the building blocks for securing individual informational privacy. The other side of this coin is that an extension of the right to explanation warrants better disclosures about how individual data is collected, stored, and shared or utilised (both by the social media platform and, through licensed access, by unknown third parties). The objective in both these elements is to reinforce the agency of social media users, which has been held as sine qua non for the right to privacy under Article 21.

2. Greater accountability for social media intermediaries – Many of our survey takers and focus group participants voiced the need for more governmental oversight of social media platforms. Despite also stating their lack of complete trust in the government’s ability to regulate this space, there was a strong consensus on the need to develop better accountability measures through some form of regulation. The recently enacted IT (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, have to some extent brought greater oversight of social media platforms. While the content of these rules is in question, and has also been challenged before several state high courts, their stated intent to bring greater accountability to social media platforms is not severely disputed.[66] The heart of the debate lies in the balancing act of regulation versus individual rights and liberties exercised on these platforms. In some limited sense, it can be argued that the rules display an intention for better regulation and accountability, but perhaps require more refinement in terms of their content.

3. Better regulation of social media platforms – As discussed, our respondents mostly advocated for the government to step in with more effective regulation of social media platforms. Governments have been considering different regulatory options with respect to social media platforms, and their exploitation of the personal data of users.[67] In India too, the PDP Bill manifests the government’s intent of bringing such platforms within a regulated data governance regime. The PDP Bill, for instance, proposes the establishment of a Data Protection Authority (“DPA”), which will be responsible for safeguarding interests of data principals, preventing misuse of data, and ensuring compliance with the new law’s mandate.[68] Like other sectoral regulators, this proposed DPA is to comprise domain experts with extensive experience in the fields of data protection, information technology, data management, cyber and internet laws, etc.[69] The proposed PDP Bill therefore appears to check some important boxes from a regulatory perspective.

4. Consider setting up specialised courts for addressing privacy issues – A common theme that featured in almost all of our focus group sessions was the need for an organised sectoral regulator to rein in and bring checks and balances to the social media platforms. The proposed Data Protection Authority will largely satisfy the requirement of a sector regulator, ensuring compliance with the PDP Bill’s legal mandate.[70] However, in addition to the regulatory oversight, it might be worthwhile considering the establishment of specialised cyber courts to address issues, including privacy related problems, emanating from the internet. The existing internet governance laws could be amended to establish specialised adjudicators for cases involving privacy breaches, with specialised procedures. As a caveat, it must be added that each of these options has its own set of logistical challenges which must be examined separately in research and impact evaluation studies, to determine maximum feasibility.

5. Legislative amendments – What becomes somewhat evident is the need for interim legislative measures while the PDP Bill is being debated by Parliament. It is pertinent to mention that the PDP Bill will remedy some of the existing deficiencies to some extent; however, the government should consider using short-term measures which may be adopted through the Information Technology Act, 2000,[71] the overarching statute governing the online ecosystem in India, including intermediaries like social media platforms. Crucially, recognition of the rights of social media (and other online) users, including the aforementioned expanded right to explanation, could be effectuated through this process.[72] Such a recognition of online rights could facilitate industry standards for adopting simple and plain language display of T&Cs, periodic reminders of the same, prompt alerts on updates, internal grievance redressal measures for individual complaints, clear disclosure of third parties accessing personal data of users and allowing for opt-outs from such arrangements without prejudicing the service delivery of the platform, etc.

B. Measures for Social Media Platforms

In addition to the government taking legislative steps, the social media platforms can also improve their internal mechanisms and the user interface to allow better access to and understanding of their T&Cs and privacy policies. These could include:

1. Transparent internal grievance redressal – Social media platforms could, within their own oversight frameworks, establish grievance redressal mechanisms. Facebook’s content oversight board is an experimental example of how internal grievance redressal can be established in an institutional and independent format.[73] This, however, comes with a caveat: its success has been limited by how it is set up and by how Facebook incorporates the Board’s recommendations. In addition, for any self-governance mechanism, the organisation will always consider whether the benefits justify the costs of setting it up and keeping it running, especially when it is not a legal requirement.

Secondly, online dispute resolution (“ODR”) may be considered as a way to set up virtual grievance redressal forums, or an online ombudsman. Akin to flexible dispute resolution techniques like mediation and arbitration, ODR has the added benefit of allowing disputes to be resolved virtually, and is gaining traction both in India and abroad.[74]

2. Interactive technologies to ease and incentivise the dissemination of T&Cs – In terms of user interface, there is considerable room for improvement in making T&Cs and privacy policies simpler to disseminate. Instead of relying on T&Cs as legal documents to potentially indemnify themselves against any prospective legal action, platforms should drastically redraft these policies with the user at their centre. We realise that such an action is unlikely to come from the bona fides of social media companies alone, and reiterate the need for requisite legislation to incentivise and mandate such practices across the industry.

C. Recommendations for the Research Community

Furthermore, our investigation of the privacy paradox in the Indian context raises numerous additional questions that build on the existing research on data protection on social media platforms. As we have sought to understand how the privacy paradox manifests in ways that are substantially different from current investigations in other parts of the world, we have also realised that work such as this needs to be expanded even further to gain a more comprehensive understanding that is culturally and contextually sensitive.

Building upon the findings of this study, the future research directions that we will embark on include a deeper exploration of the nuances of UI design, using data-driven, quantitative measures to benchmark how the complexity of interface design evolves and how that complexity interacts with users’ perception and agency when it comes to informational privacy. In addition, we believe that a more detailed analysis of the ToS and privacy policies can be tied to the UI design findings, which can unearth more granular trends in the evolution of users’ agency and their exercise of privacy controls. Finally, a cross-country analysis of this data will help to better target the interventions and changes made by the platforms, so that these measures become more effective and improve the state of the information ecosystem writ large.

Appendix A: Survey – Understanding Privacy Preferences on Social Media in India

Privacy on Social Media has increasingly become an important issue for participation on the Internet. This survey aims to collect information on social media users’ privacy preferences. Our four platforms of focus are Facebook, YouTube, LinkedIn, and Twitter.

The answers to this survey will not be shared outside of the research team and will be destroyed upon completion of the study. To know more, please refer to our privacy policy here: https://ai-ethics.github.io/privacy-policy-complexities/.

Pre-Survey Question

1. What does information privacy mean to you?

First, we want to know about your reasons to join Social Media.

2. For what reasons did you join Facebook, LinkedIn, Twitter or YouTube? Select all that apply. * (Multiselect-Grid)

a. To feel empowered (being a part of a network/community)

b. To keep up with friends and family

c. To get updated about social events and issues

d. To gain valuable information about jobs, studies, and other opportunities

e. To participate in commerce (e.g., setting up an online presence for your business)

f. To reach out to potential customers, partners, new audience

g. To get entertainment

h. Other:

3. How do you access Facebook, LinkedIn, Twitter, YouTube? * (multiselect)

a. Via a web browser

b. Via a downloaded app on my desktop or laptop computer

c. Via a downloaded app on my mobile device

4. How often do you access these platforms? * (single select)

a. Never

b. Once or Twice a Year

c. Once or Twice a Month

d. Once or Twice a Week

e. Almost Daily

Privacy Preferences: We’d like to know your views and your privacy preferences on these four platforms: Facebook, YouTube, LinkedIn, and Twitter

5. How would you describe whether the Facebook platform helps you with your goals as you selected on the first page? (single select for each response: Not helpful, Somewhat helpful, Helpful, Very helpful, N/A)

a. To create a larger network

b. To keep up with friends and family

c. To get updated about social events, issues

d. To gain valuable information about jobs, studies, and other opportunities

e. To participate in commerce (e.g., setting up an online presence for your business)

f. To reach out to potential customers, partners, new audience

g. To get entertainment

h. To feel part of a community

6. Can you say a bit about your ratings? Why or why not is Facebook helpful to you?

7. How would you describe whether the YouTube platform helps you with your goals? (single select for each response: Not helpful, Somewhat helpful, Helpful, Very helpful, N/A)

a. To create a larger network

b. To keep up with friends and family

c. To get updated about social events, issues

d. To gain valuable information about jobs, studies, and other opportunities

e. To participate in commerce (e.g., setting up an online presence for your business)

f. To reach out to potential customers, partners, new audience

g. To get entertainment

h. To feel part of a community

8. Can you say a bit about your ratings? Why or why not is YouTube helpful to you?

9. How would you describe whether the LinkedIn platform helps you with your goals? (single select for each response: Not helpful, Somewhat helpful, Helpful, Very helpful, N/A)

a. To create a larger network

b. To keep up with friends and family

c. To get updated about social events, issues

d. To gain valuable information about jobs, studies, and other opportunities

e. To participate in commerce (e.g., setting up an online presence for your business)

f. To reach out to potential customers, partners, new audience

g. To get entertainment

h. To feel part of a community

10. Can you say a bit about your ratings? Why or why not is LinkedIn helpful to you?

11. How would you describe whether the Twitter platform helps you with your goals? (single select for each response: Not helpful, Somewhat helpful, Helpful, Very helpful, N/A)

a. To create a larger network

b. To keep up with friends and family

c. To get updated about social events, issues

d. To gain valuable information about jobs, studies, and other opportunities

e. To participate in commerce (e.g., setting up an online presence for your business)

f. To reach out to potential customers, partners, new audience

g. To get entertainment

h. To feel part of a community

12. Can you say a bit about your ratings? Why or why not is Twitter helpful to you?

Privacy information:

13. How would you describe whether the platforms make their privacy policies legible and accessible to you? (multiple choice grid)

a. Terms of Service have clear privacy policies

b. Privacy policies are easily understandable

c. Privacy policies are difficult to find

d. Privacy language is obscure

e. Personal Information is readily sharable

f. It’s difficult to find places to withdraw personal information

g. It takes too much time to understand complicated privacy policies

14. Are you interested in controlling the privacy of your information on these platforms? (Grid with all the platforms and the following options for each of them)

a. Yes

b. No

15. Have you read through the Terms and Conditions (T&C) for the platform? (Grid with all the platforms and the following options for each of them)

a. Yes

b. No

c. Don’t remember

16. If you answered yes to the above, how easy did you find the language to read? (Grid with all the platforms and the following options for each of them)

a. Difficult to understand

b. Easy to understand

17. What could the platform have done to make the language of the T&C easier to understand? (long form)

18. Have you interacted with the privacy settings on these platforms? (Grid with all the platforms and the following options for each of them)

a. Yes

b. No

c. Don’t remember

19. Have you utilised any of the privacy controls provided to you by the platform? (Grid with all the platforms and the following options for each of them)

a. Yes

b. No

c. Don’t remember

20. What has your experience been interacting with the privacy controls of the platform? (Grid with all the platforms and the following options for each of them)

a. Difficult to navigate

b. Easy to navigate

21. Do you feel that the privacy controls offered by the platform gave you meaningful control? (Grid with all the platforms and the following options for each of them)

a. Yes

b. No

22. Did the provided privacy controls meet your expectations of privacy from the platform? (Grid with all the platforms and the following options for each of them)

a. Yes

b. No

23. Please specify anything here that you would like to share about your experience interacting with these privacy controls (long form)

24. What could the platform have done to improve your experience in terms of privacy controls? (long form)

25. Which would you give first preference in terms of improvements for privacy by the platform? (Grid with all the platforms and the following options for each of them)

a. Language of the T&C

b. Privacy controls accessibility

Background Information

We also ask some optional background and demographic questions. This information will be used to ensure that perspectives from a diverse group of participants are heard and recorded.

26. How old are you? (single select)

a. Younger than 17

b. 18-34

c. 35-49

d. 50-64

e. 65+

27. What is the highest level of education you have completed or are in the process of completing? (single select)

a. High School

b. Some College Experience

c. Bachelor’s Degree

d. Master’s Degree

e. Professional Degrees

f. PhD

28. What is your occupation? (short answer)

29. What is your gender? (multi select)

a. Female

b. Male

c. Other (Please specify):

30. What is the PINCODE in India where you have lived for the longest period of your life? (short text)

31. What is the PINCODE in India where you currently live? (short text)

Further participation in the research

We are looking to interview participants of this survey. If you are interested in expressing your opinions about information privacy policies and discourses in India, please let us know. The interview should last less than an hour via Zoom/Skype or phone call. Participants will receive a small amount of compensation.

32. Would you be open to being contacted for a follow-up interview about topics that come up from this survey? (single select)

a. Yes

b. No

If you answered “yes” to any of the above, please provide your name and an e-mail address where we may contact you: (open end)

- Tarleton Gillespie. (2018). Custodians of the Internet. Yale University Press.

- Amanda Silberling. (2021, November 10). Facebook will no longer allow advertisers to target political beliefs, religion, sexual orientation. TechCrunch. <https://techcrunch.com/2021/11/09/facebook-will-no-longer-allow-advertisers-to-target-political-beliefs-religion-sexual-orientation>.

- (2017) 10 SCC 1

- See Puttaswamy judgment id., especially para 142 (plurality opinion of Justice Chandrachud). See also Vrinda Bhandari et al. (2017). An Analysis of Puttaswamy: The Supreme Court's Privacy Verdict. IndraStra Global, 11, 1-5. <https://nbn-resolving.org/urn:nbn:de:0168-ssoar-54766-2>.

- Vrinda Bhandari and Renuka Sane. (2018). Protecting Citizens from the State Post Puttaswamy: Analysing the Privacy Implications of the Justice Srikrishna Committee Report and the Data Protection Bill, 2018. Socio-Legal Review, 14(2), 143-169.

- Personal Data Protection Bill, 2019, <http://164.100.47.4/BillsTexts/LSBillTexts/Asintroduced/373_2019_LS_Eng.pdf>.

- Mayank Jain. (2017). Government sets up committee to study data protection, tasks it with framing a draft law. Scroll.in, <https://scroll.in/latest/845753/government-sets-up-committee-to-address-data-protection-tasks-it-with-framing-a-draft-law>.

- Danah Boyd and Kate Crawford. (2011). Six Provocations for Big Data. Presented at the Oxford Internet Institute, A Decade in Internet Time: Symposium on the Dynamics of the Internet and Society, 21 September.

- PDP Bill, supra 4. See draft sections 7 (issuing notice) and 11 (consent)